To Do or Not To Do

Decision Making

At their most basic level, programs are written to carry out simple sequences of behaviors. For example, you might want your robot to drive forward and make some turns to reach a destination. But what if you want your robot to wait for the right time to start driving and complete its route? That requires programming with conditional statements. You use a conditional statement to define what the "right time to start" is within your project. Maybe the "right time" is after a button is pressed, or when a sensor detects a specific value. When you watch the robot's behavior, it will seem like the robot is deciding when to start driving, but it is only following the condition you set for when driving should start.

Conditional statements are powerful programming statements that use a boolean (TRUE or FALSE) condition. Using the same example scenario as above, you could program your robot to repeatedly check whether its brain screen is pressed and drive forward when it is. The conditional statement in that project might read something like, "If the screen detects that it is pressed (TRUE), run the driving sequence." This statement does not name any behavior for when the condition is FALSE (the screen is not pressed), so the robot takes no action in that case. Conditional statements allow you to develop projects in which the robot behaves differently depending on what it senses.
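The "if the screen is pressed, drive" idea can be sketched in plain Python. This is only an illustration: `FakeRobot`, `screen_pressed`, and `drive_forward` are made-up names standing in for a real robot, not part of any VEX library.

```python
# Hypothetical stand-in for a robot; these names are assumptions for illustration.
class FakeRobot:
    def __init__(self, presses):
        # presses: a list of True/False screen readings, one per check
        self.presses = list(presses)
        self.log = []

    def screen_pressed(self):
        # Return the next reading; report "not pressed" once readings run out.
        return self.presses.pop(0) if self.presses else False

    def drive_forward(self):
        # Record the action instead of moving real motors.
        self.log.append("drive")


def check_and_drive(robot, checks):
    """Check the screen `checks` times and drive only when the condition is TRUE."""
    for _ in range(checks):
        if robot.screen_pressed():   # boolean condition: TRUE or FALSE
            robot.drive_forward()    # runs only when the condition is TRUE
        # There is no else branch, so nothing happens when the condition is FALSE.


robot = FakeRobot([False, False, True, False])
check_and_drive(robot, 4)
print(robot.log)  # ['drive']
```

The robot is checked four times but drives only once, on the single check where the screen reading was TRUE.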

Creating a Stop Button

Stop or Drive

Editing the Brain Screen

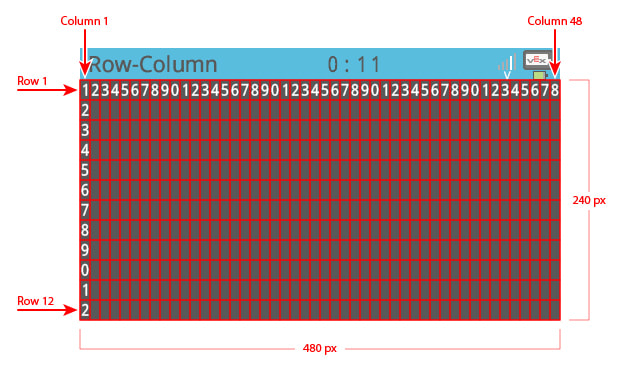

X-value = left and right pixels (1 to 480)

Y-value = up and down pixels (1 to 240)

The center of the screen would be (240, 120), where x = 240 and y = 120
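As a rough sketch of how these coordinates can be used, the Python below computes the center of the screen and decides which half of the screen a press landed on. The function names are made up for this example, and treating a press exactly on x = 240 as "right" is an arbitrary choice.

```python
SCREEN_WIDTH = 480   # x-values run left to right
SCREEN_HEIGHT = 240  # y-values run up and down


def screen_center():
    """Return the (x, y) point in the middle of the screen."""
    return (SCREEN_WIDTH // 2, SCREEN_HEIGHT // 2)


def side_pressed(x):
    """Return 'left' or 'right' for the half of the screen that was pressed."""
    if x < SCREEN_WIDTH // 2:
        return "left"
    return "right"  # assumption: a press on the center line counts as right


print(screen_center())    # (240, 120)
print(side_pressed(100))  # left
print(side_pressed(400))  # right
```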

Left Or Right

User Interfaces

The buttons you created on the brain's screen are the beginning of a basic Graphical User Interface (GUI). There are other types of User Interfaces (UIs), but we will focus on GUIs because they are the type we use most.

A UI is a space that allows the user to interact with a computer system (or machine). When you programmed the buttons on the brain's screen, you gave users a way to interact with the Clawbot so they could make it stop or turn left or right. When you use a touchscreen on one of your devices (tablet, smartphone, smartwatch), the screen is often the only interface you have. Your device may have volume or power buttons as well, but you mainly interact with the screen.

After programming your own buttons on the brain's screen, you should have a better sense of how a touchscreen might be programmed to detect which icon or button you want to select. Of course, professionals use more sophisticated ways of programming those features instead of hard-coding exactly where a button should be. Professional GUI programs adapt more easily when buttons and icons move or other variables change, but they share some of the same underlying principles.

Those principles form the foundation of the User Experience (UX) while using a UI. The User Experience is how well the interface lets me, as the user, do what I'm trying to do. Is the interface working as I expect it to? Is it responsive to what I'm trying to communicate with my presses? Is it organized well, or can buttons, icons, and menus be moved around to make it easier? What does the interface look like in general? Is it pleasing to look at, and does it make me want to use it more often? While a UI is still being developed and going through iterations, the developers collect data on what works as planned and what needs to be fixed or enhanced. That data then informs the next round of iterative design. Some of the recommended UX changes are made before the device is released. But the device might also be sold as is, with those changes made later, before the next version is offered to the public.
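To make the contrast between hard-coded and more adaptive buttons concrete, here is a minimal Python sketch in which each button stores its own position and size. The `Button` class and `find_pressed` function are hypothetical names for this example, not how any particular GUI toolkit works.

```python
from dataclasses import dataclass


@dataclass
class Button:
    label: str
    x: int       # left edge of the button
    y: int       # top edge of the button
    width: int
    height: int

    def contains(self, px, py):
        """Return True when the point (px, py) falls inside this button."""
        inside_x = self.x <= px < self.x + self.width
        inside_y = self.y <= py < self.y + self.height
        return inside_x and inside_y


def find_pressed(buttons, px, py):
    """Return the label of the first button containing the press, or None."""
    for button in buttons:
        if button.contains(px, py):
            return button.label
    return None


buttons = [Button("stop", 0, 0, 240, 240), Button("go", 240, 0, 240, 240)]
print(find_pressed(buttons, 100, 50))  # stop

# Swap the two buttons; the detection code itself does not change.
buttons[0].x, buttons[1].x = 240, 0
print(find_pressed(buttons, 100, 50))  # go
```

Because the press is compared against each button's stored position, moving a button only means changing its data, not rewriting the conditions that detect it.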

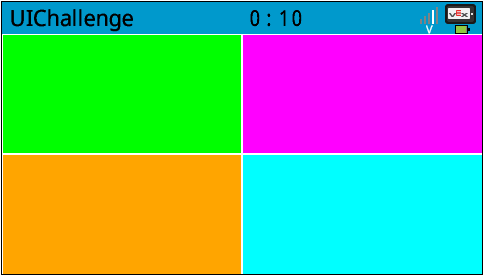

The User Interface Challenge

In the User Interface Challenge, you will program the Clawbot so that a user can press the brain's screen to control the arm and claw motors. The four buttons on the screen will then be used to pick up and put back ten different objects. This challenge does not require the Clawbot to drive or turn. Each object is picked up and then returned to the same spot on the table or floor.

Rules:

- Before you begin programming, write your pseudocode in the "To Do or Not To Do" Google Doc.

- Each of the four buttons must only do one of the four actions: open the claw, close the claw, lift the arm, or lower the arm.

- Using the Controller is not allowed.

- Each Clawbot will need to lift and replace different objects.

- The object needs to be lifted higher than the arm's motor before it is replaced on the table.

When complete, screenshot your code and paste it into the doc "To Do or Not To Do" and turn in the assignment.
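One way to think about the "one button, one action" rule before writing your pseudocode is sketched below in plain Python. It assumes the screen is split into four equal quarters and that y-values increase from the top of the screen down. The layout, the action names, and `action_for_press` are all assumptions for illustration, and no real motor commands are used.

```python
SCREEN_WIDTH = 480
SCREEN_HEIGHT = 240

# Assumed layout: each quarter of the screen is one button with one action.
ACTIONS = {
    ("left", "top"): "open claw",
    ("right", "top"): "close claw",
    ("left", "bottom"): "lift arm",
    ("right", "bottom"): "lower arm",
}


def action_for_press(x, y):
    """Return the single action assigned to the quarter containing (x, y)."""
    column = "left" if x < SCREEN_WIDTH // 2 else "right"
    row = "top" if y < SCREEN_HEIGHT // 2 else "bottom"
    return ACTIONS[(column, row)]


print(action_for_press(100, 50))   # open claw
print(action_for_press(400, 200))  # lower arm
```

Your own project may place the buttons differently; the point is that every press maps to exactly one of the four actions.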